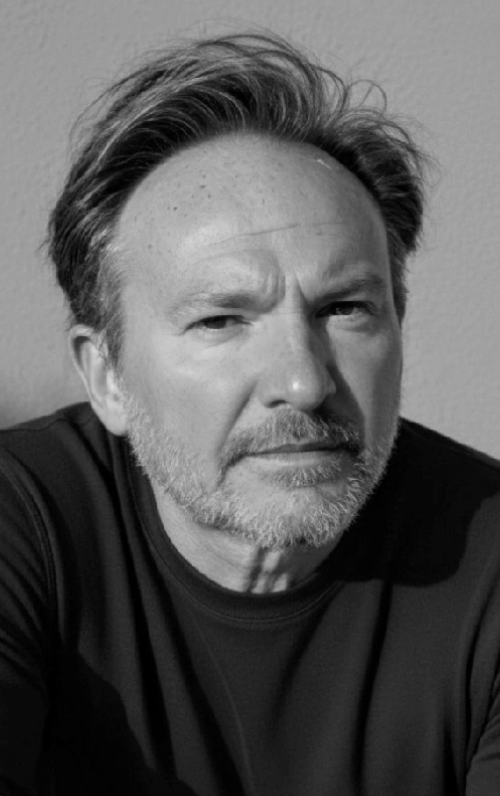

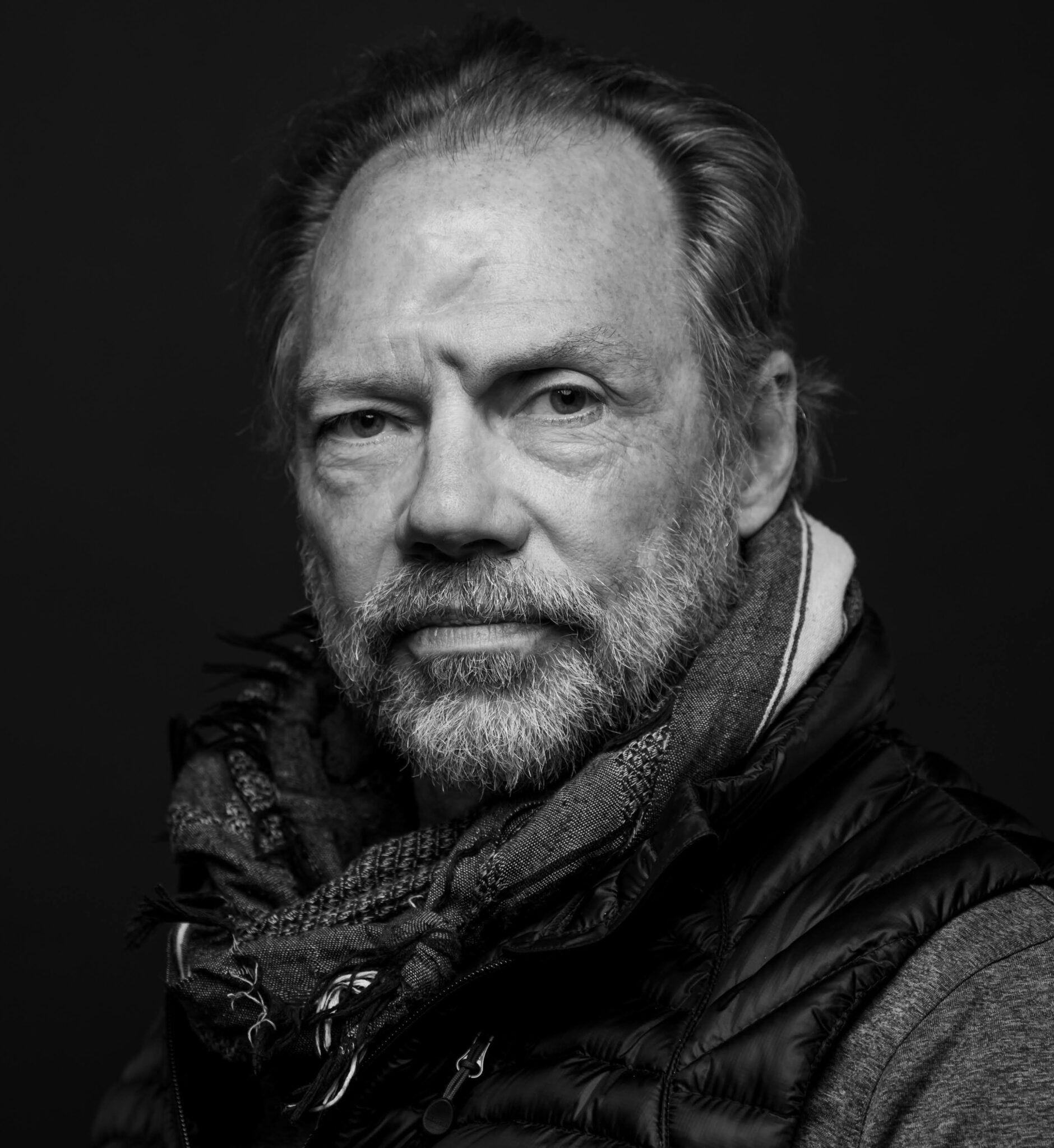

In the summer of 2016, then-Director of National Intelligence Lt. Gen. James Clapper began to detect a worrisome trend. It was an election year, and he was starting to see an uptick in Russian misinformation and disinformation activity that went well beyond the usual playbook. Russia had been known to use disinformation ahead of previous US elections, so it wasn't shocking at first. The then-DNI and other leaders within the Intelligence Community expected a certain amount of ambient Russian activity, but nothing like what Clapper was starting to see play out.

“Russia has a long history of interfering in their own and others’ elections, but never on a scale, aggressiveness, or breadth of scope as they did in our 2016 election,” Clapper told The Cipher Brief last week.

Clapper wrote extensively about what he saw in his 2018 book, Facts and Fears: Hard Truths from a Life in Intelligence, including how Russian hackers began employing tactics around social media and how quickly they were spreading across the accounts of US persons, which introduced a problem for the IC. “The reason we were slow on the uptake in that is that there is a reticence about monitoring U.S. communications, even if they’re publicly available,” Clapper told us.

The details of Edward Snowden’s theft and leaking of intelligence secrets in 2013 were still fresh in his mind, as was a clear understanding of how easily messages about what the government was actually doing, and under what authority, could be lost in the media noise.

“After being burnt by Snowden on that sort of thing, I wasn’t as aggressive as I should have been in pushing the community to pay attention to what was going on in social media,” said Clapper.

What Clapper was detecting in 2016, and what it has become today, has been fueled by another growing phenomenon that a recent RAND study refers to as ‘truth decay’: the diminishing role that facts and analysis have played in America, not just over the course of one presidential administration but over the last two decades. RAND defines ‘truth decay’ as an increasing disagreement over facts that tends to blur the line between opinion and fact, with opinion now seeming to have more influence than fact in American society. The result has been a decline in trust in what were long considered ‘trusted sources’.

“There is a phenomenon where we disregard facts, empirical data, and objective analysis, and this is only proliferated by social media where people live in different reality bubbles,” Clapper told us. “This problem is not going to be able to be solved by a whole of government approach, it needs a whole of society effort.”

What would the result of that whole-of-society effort look like? Clapper has an idea about that. “The basic format may be modeled after intelligence community products. The PDB [President’s Daily Brief] could be one format. That would be a one-page article and would certainly be useful. I also think more in-depth products like the NIE [National Intelligence Estimate] would provide more depth. Overall, I would model these products after what the community produces now and there are a variety of formats and templates that could be used for that.”

Let’s talk about it. Read the Background Brief below and then join us Wednesday, February 24 at 1:30 p.m. as we get a briefing from Lt. Gen. Clapper on why something like this is needed in today’s intelligence community and what’s at risk if we don’t get this right. Members receive registration links via email. Not a member? That's an easy fix.