OPINION -- In the climax of the 1990 movie “The Hunt for Red October”, the Soviet captain of the V.K. Konovalov makes a fatal error. Intent on destroying the defecting Red October submarine, he orders his crew to deactivate the safety features on his own torpedoes to gain a tactical edge. When the torpedoes miss their American target, they do exactly what they were programmed to do: they find the nearest large acoustic signature. Because the "safeties" were off and the weapon was no longer "fit for its purpose," it turned back and destroyed the very ship that launched it.

As the Department of War (DoW) moves to integrate "frontier" AI models into the heart of national security, we are approaching a "Red October" moment. The recent debate over Anthropic’s engagement with the Pentagon isn't just about corporate ethics - it's about whether we are handing our warfighters tools with the strategic safeties off.

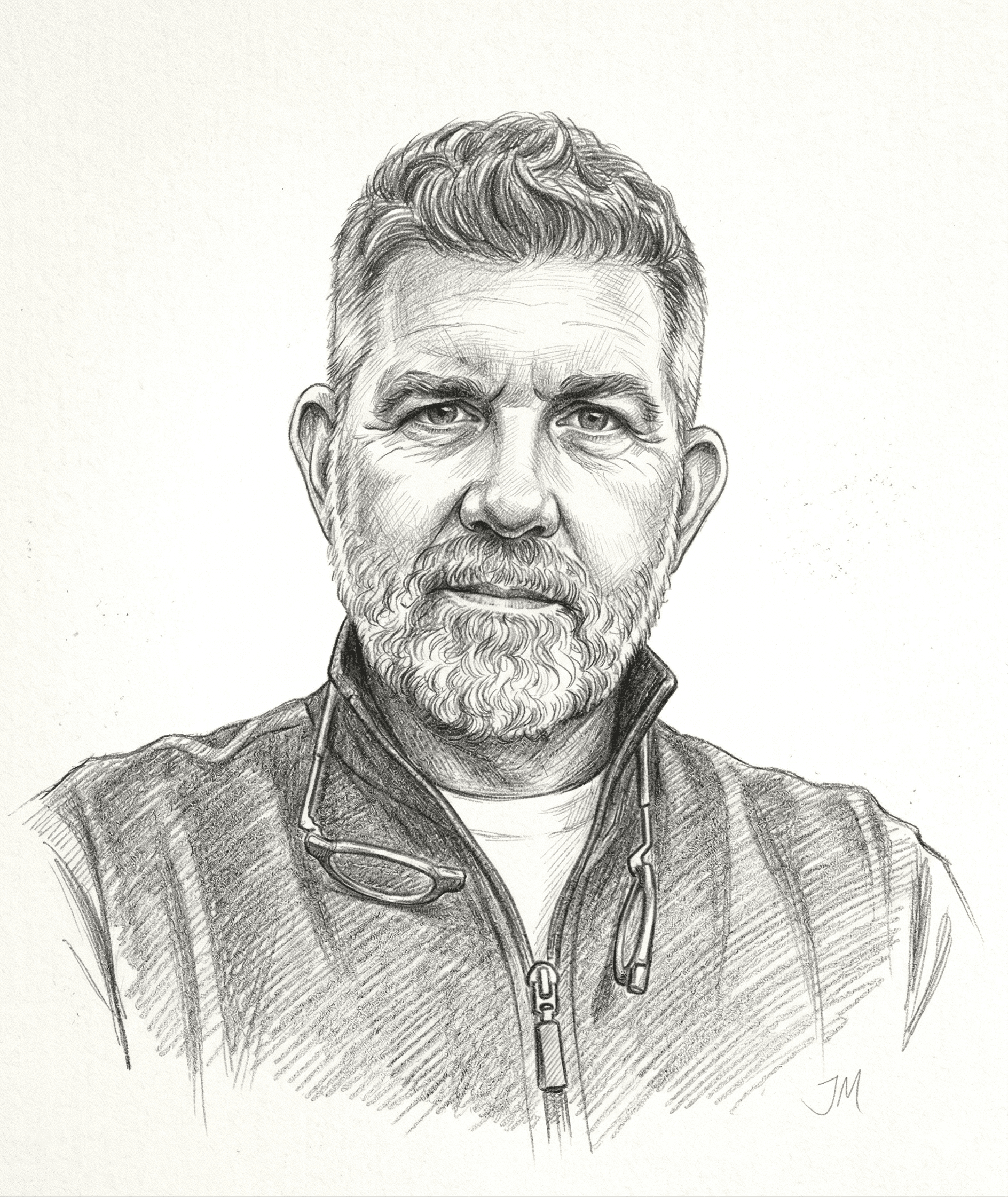

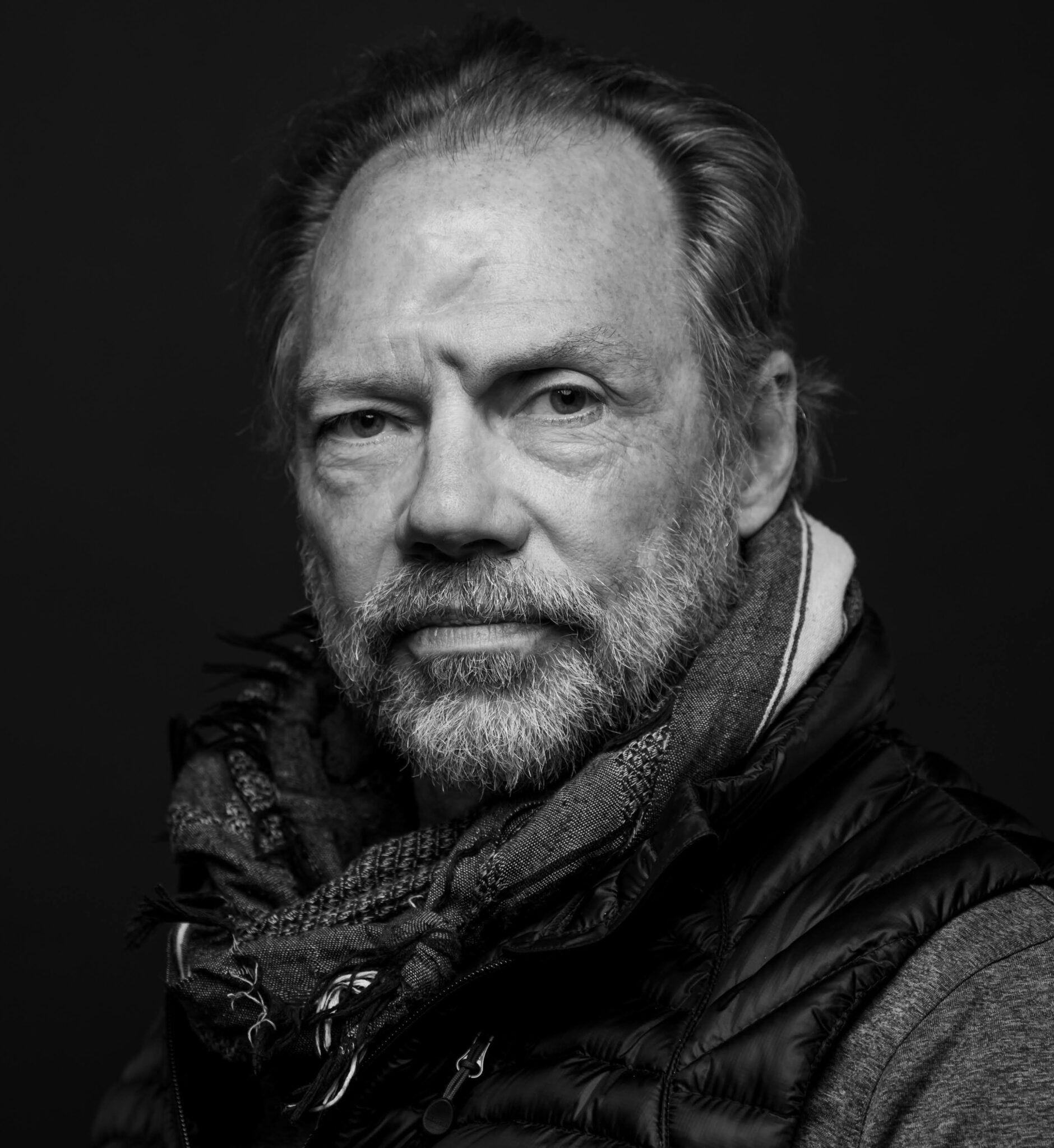

As the former Chief AI Officer of the National Geospatial-Intelligence Agency (NGA), I believe the greatest risk we face is the lack of a sophisticated, mission-aligned framework to judge these models before they reach the field.

To avoid the fate of the Konovalov, we need three things: a transition to "fit-for-purpose" evaluation, a commitment to rigorous existing standards, and the recognition that in national security, high quality is the only true form of safety.

The Fallacy of the General-Purpose Model

In the commercial sector, a model that "hallucinates" a legal citation or generates a slightly off-brand image is a nuisance. In a theater of operations, those same errors are lethal. We must stop judging AI in the abstract and start judging it based on its specific intent.

While generalist models might be suitable for orchestrating workflows, the work itself should be performed by "expert" agents or, better yet, by functions and APIs that do only what you ask and have been tested and accredited for that function.

Both the creators of these models and the DoW must co-develop a Test and Evaluation (T&E) framework that moves beyond general "alignment" and into statistical reality. This framework must statistically score quality and accuracy against the specific variables of a mission environment, and it must accredit models for specific use cases rather than granting a blanket "safe for government" seal of approval.
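
As a rough illustration of what "statistical reality" could mean in practice, the sketch below scores a model per use case and accredits it only when the lower bound of a 95% Wilson confidence interval on its measured accuracy clears that use case's required threshold. Everything here is a hypothetical assumption made for illustration, not an existing DoW process: the names `UseCaseResult`, `wilson_lower_bound`, and `accredit`, the example use cases, and the thresholds.

```python
import math
from dataclasses import dataclass


@dataclass
class UseCaseResult:
    """Test results for one hypothetical use case (illustrative only)."""
    use_case: str
    correct: int              # test cases the model got right
    trials: int               # total test cases run in the mission-like environment
    required_accuracy: float  # assumed threshold set by the accrediting authority


def wilson_lower_bound(correct: int, trials: int, z: float = 1.96) -> float:
    """Lower bound of the Wilson score interval for a binomial proportion.

    z = 1.96 corresponds to a two-sided 95% confidence level.
    """
    if trials == 0:
        return 0.0  # no evidence, so no credit
    p = correct / trials
    denom = 1 + z * z / trials
    centre = p + z * z / (2 * trials)
    margin = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials))
    return (centre - margin) / denom


def accredit(results: list[UseCaseResult], z: float = 1.96) -> dict[str, bool]:
    """Accredit each use case separately; there is no blanket pass."""
    return {
        r.use_case: wilson_lower_bound(r.correct, r.trials, z) >= r.required_accuracy
        for r in results
    }


# Hypothetical data: both use cases show 95% observed accuracy,
# but only one has enough test evidence to support a 90% requirement.
results = [
    UseCaseResult("document triage", correct=950, trials=1000, required_accuracy=0.90),
    UseCaseResult("imagery change detection", correct=19, trials=20, required_accuracy=0.90),
]
decisions = accredit(results)
```

Under these assumed numbers, the first use case is accredited and the second is not, even though the observed accuracy is identical: twenty trials are too few to support the claim. The design choice worth noting is that the decision is made per use case and on the confidence bound, not on the raw score.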

We should not expect a general frontier model to perform perfectly in autonomous targeting if it wasn't trained for it. We need precision instruments for precision missions. The government’s primary duty is to ensure that the warfighter is handed a tool that has been subjected to rigorous, transparent, and statistically sound evaluation before it ever enters a kinetic environment.

The Standard Already Exists

We do not need to invent a new philosophy of governance for AI; we simply need to apply the high-bar standards the DoD has already established for autonomous systems. The benchmark is DoD Directive 3000.09, "Autonomy in Weapon Systems."

The directive is explicit in its requirement for human agency, stating:

"Autonomous and semi-autonomous weapon systems will be designed to allow commanders and operators to exercise appropriate levels of human judgment over the use of force."

This is the standard. It requires that any system, whether a simple algorithm or a complex neural network, undergo "rigorous hardware and software verification and validation (V&V) and realistic system developmental and operational test and evaluation (OT&E)."

Avoiding the WOPR Scenario

We have seen the fictional version of a failure to follow this standard before. In the 1983 classic movie “WarGames”, the military replaces human missile silo officers with the WOPR (War Operation Plan Response) supercomputer because the humans "failed" to turn their keys during a simulated nuclear strike. By removing the human from the loop to increase efficiency, the system's creators nearly triggered World War III when the AI couldn't distinguish between a game and reality.

We should view the National Security Memorandum (NSM) on AI, published in 2024, as the modern guardrail against this cinematic nightmare. The NSM’s explicit prohibition against AI-controlled nuclear launches is not a new rule, but rather the 3000.09 standard applied to the most extreme case. If our standards work for our most consequential strategic assets, they must be the baseline for accrediting frontier models in any mission-critical capacity.

The Law is Not Optional

As we lean into this new technological frontier, we must remind ourselves that the Law of Armed Conflict (LOAC) remains our North Star. The principles of distinction, proportionality, and military necessity are absolute. AI is not an "alternative" to these laws; it is a tool that must be proven to operate strictly within them. We follow the LOAC today, and the AI we build must be engineered to do the same - without exception.

Good AI is Safe AI

There is a common misconception that AI safety and AI performance are at odds and that we must "slow down" performance to ensure safety. This is a false dichotomy.

Good AI - high-quality, high-performing AI - is the safest AI.

A model that achieves the highest standards of accuracy and reliability is the model that best safeguards the user. By insisting on a statistical "fit-for-purpose" accreditation rooted in DoDD 3000.09, we ensure our warfighters are equipped with systems that reduce error, minimize collateral risk, and provide the mission assurance they deserve. In the high-stakes world of national security, "good enough" is a liability. Only the highest-standard AI can truly protect the mission and the men and women who carry it out.

I do believe the "Super-Human" computer is on the way, and as smart as that model will be, we should never give it the keys to the silos.

Read more expert-driven national security insights, perspective and analysis in The Cipher Brief because National Security is Everyone’s Business.